Research Topics

Audio-Visual Multimodal Learning

Human perception is inherently multimodal, integrating sight, sound, and other senses to create a rich and unified experience of the world. This combination allows us to recognize objects, understand their relationships, and interpret details like color, shape, material properties, location, and movement. Visual cues help us identify and locate objects, while auditory cues fill in gaps by providing depth, spatial awareness, and motion details. However, most current systems handle audio and visual information separately, limiting their capacity to mimic human-like perception. Our work in multimodal learning aims to bridge this gap by integrating both audio and visual inputs across various tasks, such as audio-visual classification, event localization, representation learning, audio synthesis, and depth perception. We emphasize learning under weak supervision, enabling methods to perform well with minimal labeled data, thus reducing the dependency on extensive manual annotations while using the complementary nature of audio and visual data better.

Related Publications:

- Kranthi Kumar Rachavarapu, Kalyan Ramakrishnan, A.N. Rajagopalan. "Weakly-supervised audio-visual video parsing with prototype-based pseudo-labeling". IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , Seattle, USA, June 2024.

- Kranthi Kumar Rachavarapu, A.N. Rajagopalan, "Boosting Positive Segments for Weakly-Supervised Audio-Visual Video Parsing," IEEE International Conference on Computer Vision (ICCV), Paris, France, October 2023.

- Kranthi Kumar Rachavarapu, Aakanksha, Vignesh Sundaresha, A.N. Rajagopalan, "Localize to Binauralize: Audio Spatialization from Visual Sound Source Localization," IEEE International Conference on Computer Vision (ICCV), Virtual, October 2021.

Underwater Depth Estimation and Restoration

Underwater (UW) depth estimation and image restoration is a challenging task due to its fundamental ill-posedness and the unavailability of real large-scale UW paired datasets. We propose a self-supervised method that utilizes only the input UW images for training to simultaneously output the depth map and the restored image in real-time. UW depth estimation has been attempted before by utilizing either the haze information present or the geometry cue from stereo images or the adjacent frames in a video. To obtain improved estimates of depth from a single UW image, we propose our method, which utilizes both haze and geometry during training. By harnessing the physical model for UW image formation in conjunction with the view-synthesis constraint on neighboring frames in monocular videos, we perform disentanglement of the input image to also get an estimate of the scene radiance. To facilitate monocular self-supervision, we collected a Dataset of Real-world Underwater Videos of Artifacts (DRUVA) in shallow sea waters. DRUVA is the first UW video dataset that contains video sequences of 20 different submerged artifacts with almost full azimuthal coverage of each artifact. The dataset DRUVA is available at https://github.com/nishavarghese15/DRUVA.

To alleviate the limitations of prior-based (struggle when there is a mismatch between the adopted prior and actual scene conditions) and data-driven (require large-scale dataset for training) UW restoration methods, we propose a "zero-shot" method (only the input image will be used for training and testing) by harnessing the physical model for UW image formation. A re-degradation strategy is introduced to generate another UW image that respects the same image formation model. The network is optimized to disentangle the input UW image in such a manner that the relationships between the components of the input UW image and the re-degraded image are satisfied. A contrastive learning strategy is added that ensures that the restored image is pulled closer to a clean image and pushed far away from the UW image in the representation space.

Most of the UW image restoration and depth estimation methods have been devised for images under normal lighting. Consequently, they struggle to perform on poorly lit images. Even though artificial illumination can be used when there is insufficient ambient light, it can introduce non-uniform lighting artifacts in the restored images. Hence, the recovery of depth and restored images directly from Low-Light UW (LLUW) images is a critical requirement in marine applications. While a few works have attempted LLUW image restoration, there are no reported works on joint recovery of depth and clean image from LLUW images. We propose a Self-supervised Low-light Underwater Image and Depth recovery network (SelfLUID-Net) for joint estimation of depth and restored image in real-time from a single LLUW image. We have collected an Underwater Low-light Stereo Video (ULVStereo) dataset which is the first-ever UW dataset with stereo pairs of low-light and normally-lit UW images. For the dual tasks of image and depth recovery from a LLUW image, we effectively utilize the stereo data from ULVStereo that provides cues for both depth and illumination-independent clean image. We harness a combination of the UW image formation process, the Retinex model, and constraints enforced by the scene geometry for our self-supervised training. To handle occlusions, we additionally utilize monocular frames from our video dataset and propose a masking scheme to prevent dynamic transients, that do not respect the underlying scene geometry, from misguiding the learning process. Evaluations on five LLUW datasets demonstrate the superiority and generalization ability of our proposed SelfLUID-Net over existing state-of-the-art methods.

- Nisha Varghese, A.N. Rajagopalan. "Sea-ing in Low-light," IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2025.

- Nisha Varghese, Ashish Kumar, A.N. Rajagopalan. "Self-supervised monocular underwater depth recovery, image restoration, and a real-sea video dataset". Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV) , 2023.

- Nisha Varghese, A.N. Rajagopalan. "Re-Degradation and Contrastive Learning for Zero-shot Underwater Image Restoration.," The 34th British Machine Vision Conference 2023.

Dynamic Motion-aware Deblurring Neural Radiance Fields

Neural Radiance Fields (NeRFs) have made significant advances in rendering novel photorealistic views for both static and dynamic scenes. However, most prior works assume ideal conditions of artifact-free visual inputs i.e., images and videos. In real scenarios, artifacts such as object motion blur, camera motion blur, or lens defocus blur are ubiquitous. Some recent studies have explored novel view synthesis using blurred input frames by examining either camera motion blur, defocus blur, or both. However, these studies are limited to static scenes. In this work, we enable NeRFs to deal with object motion blur whose local nature stems from the interplay between object velocity and camera exposure time. Often, the object motion is unknown and time varying, and this adds to the complexity of scene reconstruction. Sports videos are a prime example of how rapid object motion can significantly degrade video quality for static cameras by introducing motion blur. We present an approach for realizing motion blur-free novel views of dynamic scenes from input videos with object motion blur captured from static cameras spanning multiple poses. We propose a NeRF-based analytical framework that elegantly correlates object three-dimensional (3D) motion across views as well as time to the observed blurry videos. Our proposed method DynaMoDe-NeRF (Dynamic Motion-aware Deblurring NeRF) is self-supervised and reconstructs the dynamic $3D$ scene, renders sharp novel views by blind deblurring, and recovers the underlying 3D motion and blur parameters. We provide comprehensive experimental analysis on synthetic and real data to validate our approach. To the best of our knowledge, this is the first work to address localized object motion blur in the NeRF domain.

Related Publications:

- Ashish Kumar, A.N. Rajagopalan. "DynaMoDe-NeRF: Motion-aware Deblurring Neural Radiance Field for Dynamic Scenes". IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , Nashville, USA, June 2025.

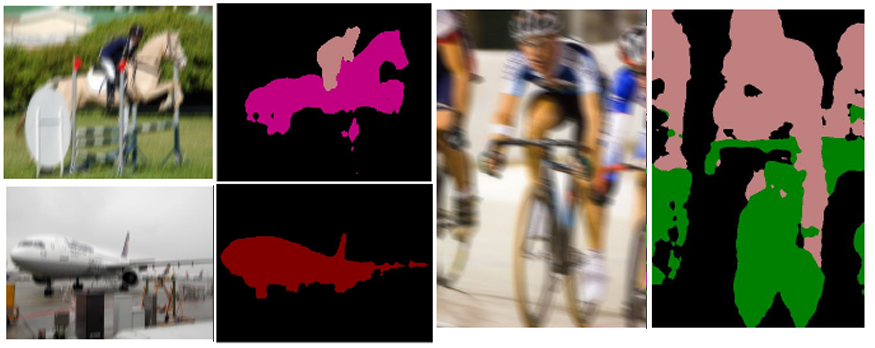

Class-centric Augmentation for Semantic Segmentation from Motion-blurred Images

Semantic segmentation involves classifying each pixel into one of a pre-defined set of object/stuff classes. Such a fine-grained detection and localization of objects in the scene is challenging by itself. The complexity increases manifold in the presence of blur. With cameras becoming increasingly light-weight and compact, blur caused by motion during capture time has become unavoidable. Most research has focused on improving segmentation performance for sharp clean images and the few works that deal with degradations, consider motion-blur as one of many generic degradations. In this work, we focus exclusively on motion-blur and attempt to achieve robustness for semantic segmentation in its presence. Based on the observation that segmentation annotations can be used to generate synthetic space-variant blur, we propose a Class-Centric Motion-Blur Augmentation (CCMBA) strategy. Our approach involves randomly selecting a subset of semantic classes present in the image and using the segmentation map annotations to blur only the corresponding regions. This enables the network to simultaneously learn semantic segmentation for clean images, images with egomotion blur, as well as images with dynamic scene blur. We demonstrate the effectiveness of our approach for both CNN and Vision Transformer-based semantic segmentation networks on PASCAL VOC and Cityscapes datasets. We also illustrate the improved generalizability of our method to complex real-world blur by evaluating on the commonly used deblurring datasets GoPro and REDS.

Related Publications:

- Aakanksha and A.N. Rajagopalan. "Improving Robustness of Semantic Segmentation to Motion-Blur Using Class-Centric Augmentation". IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , 2023.

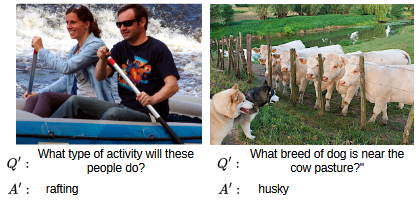

Semi-Supervised Implicit Augmentation for Data-Scarce VQA

Vision-language models (VLMs) have demonstrated increasing potency in solving complex vision-language tasks in the recent past. Visual question answering (VQA) is one of the primary downstream tasks for assessing the capability of VLMs, as it helps in gauging the multimodal understanding of a VLM in answering open-ended questions. The vast contextual information learned during the pretraining stage in VLMs can be utilised effectively to finetune the VQA model for specific datasets. In particular, special types of VQA datasets, such as OK-VQA, A-OKVQA (outside knowledge-based), and ArtVQA (domain-specific), have a relatively smaller number of images and corresponding question-answer annotations in the training set. Such datasets can be categorised as data-scarce. This hinders the effective learning of VLMs due to the low information availability. We introduce SemIAug (Semi-Supervised Implicit Augmentation), a model and dataset agnostic strategy specially designed to address the challenges faced by limited data availability in the domain-specific VQA datasets. SemIAug uses the annotated image-question data present within the chosen dataset and augments it with meaningful new image-question associations. We show that SemIAug improves the VQA performance on data-scarce datasets without the need for additional data or labels..

Related Publications:

- Bhargav Dodla*, Kartik Hegde*, and A. N. Rajagopalan. "Semi-Supervised Implicit Augmentation for Data-Scarce VQA". Computer Sciences & Mathematics Forum. 2024

Motion deblurring

Motion-blurred images form due to relative motion during sensor exposure and are favored by photographers and artists in many cases for aesthetic purpose, but seldom by computer vision researchers, as many standard vision tools including detectors, trackers, and feature extractors struggle to deal with blur. Traditional methods for blind motion deblurring typically necessitate the use of natural image priors. These hand-crafted priors struggle to generalize across different real-world examples. Also, their high-dimensional optimization necessitates higher processing times. Deep convolutional neural network (CNN) models have become efficient in modeling highly complex space variant blurs. Their processing time is comparatively much less than traditional blind methods since these methods typically require only a single pass over the network during inference. We propose an efficient deblurring design built on new convolutional modules that learn the transformation of features using global attention and adaptive local filters. We show that these two branches complement each other and result in superior deblurring performance. For video deblurring, we introduce a factorized spatio-temporal attention as an effective non-local information fusion tool. Our work is the first to present an approach for finding the key frames with the most relevant information for video deblurring. It significantly boosts the restoration performance when coupled with the proposed attention module. We also propose an efficient motion deblurring architecture built using dense deformable modules that facilitate position-specific dynamic filter transformation. For dual lens cameras, we address motion deblurring in unconstrained camera configurations. For gimbal based imaging system, we propose a motion-deblurring work to address large motion blur. We have collected a unique dataset (GYRO) which, to the best of our knowledge, is the first-ever dataset containing real blurred images captured by a camera mounted on a gimbal setup with rotational yaw motion. GYRO contains inertial measurements of the camera also. GYRO is available in GitHub - nishavarghese15/GYRO.

Related Publications:

- Maitreya Suin, A. N. Rajagopalan, "Gated Spatio-Temporal Attention-Guided Video Deblurring". IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , June 2021.

- Maitreya Suin*, Kuldeep Purohit* and A. N. Rajagopalan, "Spatially-Attentive Patch-Hierarchical Network for Adaptive Motion Deblurring," IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) , June 2020.

- Mahesh Mohan M. R., G.K. Nithin, A. N. Rajagopalan, "Deep dynamic scene deblurring for unconstrained dual-lens cameras," IEEE Transactions on Image Processing, vol. 30, pp. 4479-4491, 2021.

- Nisha Varghese, A. N. Rajagopalan, Zahir Ahmed Ansari, "Real-time Large-motion Deblurring for Gimbal-based imaging systems," IEEE Journal of Selected Topics in Signal Processing, vol. 18, no. 3, pp. 346-357, April 2024.

Low-light enhancement

Low-light images suffer from poor visibility due to a small number of photons hitting the image sensor, high noise due to camera ISO, and poor contrast. Given a low-light image, the objective of low-light enhancement is to improve the overall aesthetic quality of the image. Along with human visibility preference, low-light enhancement is indispensable for numerous computer vision tasks like object recognition, segmentation, remote surveillance, and many others. Supervised networks address the task of low-light enhancement using paired images. However, collecting a wide variety of low-light/clean paired images is tedious as the scene needs to remain static during imaging. We design a two-stage unsupervised framework for enhancing low-light images. The first stage improves the overall visibility using a pixel-wise amplification module, and the second stage further enhances the image using context-adaptive illumination-guided norm (CIN). We suggest a region-adaptive single-input-multiple-output model (SIMO) that can generate multiple enhanced images from a single low-light image. Unlike existing works that generate a single enhanced image, SIMO lets the users choose the image of their liking from a pool of enhanced images. This is specifically relevant for image enhancement, as one of the main applications of image enhancement is human visibility preference, where there cannot be a single objective solution. We propose a low-light road scene dataset (LLRS), an unpaired set of low-light and clear road scenes.

Related Publications:

- Praveen Kandula, Maitreya Suin and A. N. Rajagopalan, "Illumination-Adaptive Unpaired Low-Light Enhancement". IEEE Transactions on Circuits and Systems for Video Technology, vol. 33, no. 8, pp. 3726-3736, Aug. 2023.

Generative Models for Localized Style Manipulation and segmentation of Face Images

With the metaverse slowly becoming a reality and given the rapid pace of developments toward the creation of digital humans, the need for a principled style editing pipeline for human faces is bound to increase manifold. With the rise of online photo-sharing applications, the demand for tools enabling automatic photorealistic edits on face images has grown exponentially. Motivated by the capability of Generative Adversarial Networks (GANs) to generate high-resolution and photorealistic images and transfer the style attributes from an image to another, several GAN-based approaches. However, there exists a paucity of methods that can efficiently perform highly localized, structure-preserving, and photorealistic style edits on real images which are in accordance with the global style scheme. A few methods addressed local style editing of real images, but their framework is dependent on the highly expensive process and thus inconvenient for real-life applications. We seek to diminish the aforesaid research gap in this work. The latent space of Generative Autoencoder (GA) models can be designed to encode the structure and style information present in the input image into separate representations. Our key insight is that achieving a strong correspondence between a part of the structure representation and semantic ROIs in a disentangled fashion is pivotal for solving the problem. To this end, we design a framework where we slice structure representation such that each slice represents (gets interpreted as) all the information required by the decoder to decode each ROI independently. The style representation can then be leveraged to affect global style changes onto the input image, and the sliced structure representation can be leveraged to produce semantic segmentation masks for each ROI. Consequentially, obtaining ROI-specific style edits shall amount to a simple alpha matting task with the predicted semantic segmentation masks as the matte. Furthermore, our design is based on Swapping Autoencoder (SAE) that learns a latent representation with a neat distinction between structure and style information of the input image. Our design is significantly faster, as it inverts using a single forward pass and converges to areas of the latent space, which are more suitable for editing. We harness the disentangled nature of our model’s latent space to perform the challenging downstream task of semantic face segmentation.

Related Publications:

- Snehal Singh Tomar and A.N. Rajagopalan, "Latents2Segments: Disentangling the Latent Space of Generative Models for Semantic Segmentation of Face Images". CVPR Workshop on Computer Vision for Augmented and Virtual Reality, New Orleans, LA 2022.

- Snehal Singh Tomar, Maitreya Suin , and A.N. Rajagopalan, "Exploring the Effectiveness of Mask-Guided Feature Modulation as a Mechanism for Localized Style Editing of Real Images (Student Abstract)". 37 th AAAI Conference on Artificial Intelligence 2023.

- Snehal Singh Tomar, and A.N. Rajagopalan , " Latents2Semantics: Leveraging the Latent Space of Generative Models for Localized Style Manipulation of Face Images". 38 th AAAI Conference on Artificial Intelligence 2024 - Workshop on AI for Digital Human.

© 2024 IPCVLab -All rights reserved